DeepSeek has become one of the most talked-about topics of 2025. Even for those already familiar with LLMs (Large Language Models), there is still much to discover about the Chinese team's proposal and, especially, how to apply it today in No-Code and AI projects without complicating things.

Quick summary: DeepSeek offers a family of open-source models (7B/67B parameters) licensed for research, a specialized code generation arm (DeepSeek Coder), and an advanced reasoning variant (DeepSeek-R1) that rivals heavyweights like GPT-4o in logic and mathematics. In this article you will discover what it is, how to use it, why it matters, and the opportunities in Brazil.

What is DeepSeek?

In essence, DeepSeek is an open-source LLM (for community research) developed by DeepSeek-AI, an Asian laboratory focused on applied research. Initially launched with 7 billion and 67 billion parameters (7B/67B), the project gained notoriety by releasing complete checkpoints on GitHub, allowing the community to:

- Download the weights free of charge for research purposes;

- Fine-tune the model locally or in the cloud;

- Incorporate the model into applications, autonomous agents, and chatbots.

This puts it on the same level as initiatives that prioritize transparency, such as Meta's LLaMA 3. If you are not yet familiar with the concepts of parameters and training, check out our internal article “What is an LLM and why is it changing everything?” to get your bearings.

The innovation of the open-source DeepSeek LLM

DeepSeek's distinguishing feature isn't just its open-source code. The team has published a pre-training process over 2 trillion tokens and adopted curriculum learning techniques that prioritize higher-quality tokens in the final stages. This resulted in:

- Lower perplexity than equivalent models with 70B parameters;

- Competitive performance in reasoning benchmarks (MMLU, GSM8K);

- A more permissive license that rivals Apache 2.0.

For technical details, see the official paper on arXiv and the DeepSeek-LLM repository on GitHub.
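To make the curriculum idea more concrete, here is a minimal sketch of a data-mixing schedule in which the share of high-quality tokens grows as training advances. This is an illustration only, not DeepSeek's published recipe: the function name, the linear ramp, and the 20% and 80% endpoints are all hypothetical.

```python
def high_quality_fraction(step: int, total_steps: int,
                          start: float = 0.2, end: float = 0.8) -> float:
    """Share of each batch drawn from the high-quality pool at a given step.

    Hypothetical linear ramp: `start` at the first step, `end` at the last.
    """
    # Clamp progress to [0, 1] so out-of-range steps stay well defined.
    progress = min(max(step / total_steps, 0.0), 1.0)
    return start + (end - start) * progress


# Print the mix at a few points of an illustrative 1,000-step run.
for step in (0, 250, 500, 750, 1000):
    share = high_quality_fraction(step, 1000)
    print(f"step {step:>4}: {share:.0%} high-quality tokens")
```

A real pipeline would use this fraction to decide how many samples to draw from each data pool per batch.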

DeepSeek-R1: The leap into advanced reasoning

A few months after the launch came DeepSeek-R1, a “refined” version trained with reinforcement learning from chain-of-thought (RL-CoT). In independent assessments, R1 reaches 87% accuracy on basic math tests, surpassing names like PaLM 2-Large.

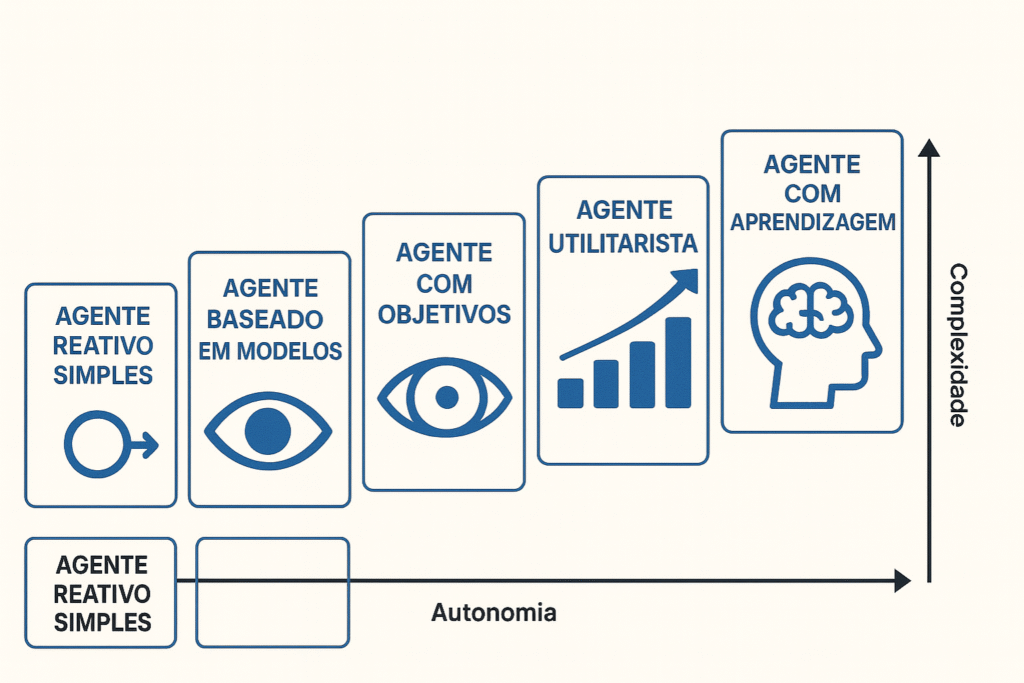

This enhancement positions DeepSeek-R1 as an ideal candidate for tasks that require structured logic, planning, and step-by-step explanation, which are common requirements in expert chatbots, study assistants, and autonomous AI agents.

If you'd like to create something similar, it's worth taking a look at our AI Agent and Automation Manager Training, where we show how to orchestrate LLMs with tools such as LangChain and n8n.

DeepSeek Coder: Code generation and comprehension

In addition to the general language model, the laboratory launched DeepSeek Coder, trained on 2 trillion tokens from GitHub repositories. The result? A specialized LLM capable of:

- Completing functions in multiple languages;

- Explaining legacy code snippets in natural language;

- Generating unit tests automatically.

For freelance teams and B2B agencies that provide automation services, this means increasing productivity without inflating costs. Want a practical way to integrate DeepSeek Coder into your workflows? Learn more in the Xano for Scalable Backends course, where we demonstrate how to connect an external LLM to the build pipeline and generate intelligent endpoints.

How to use DeepSeek in practice

Even if you're not a machine learning engineer, there are accessible ways to try DeepSeek today.

1. Via Hugging Face Hub

The community has already mirrored the artifacts on Hugging Face, allowing free inference for a limited time. All you need is an HF token to run local calls through the transformers library.

Tip: If the model doesn't fit on your GPU, use 4-bit quantization with bitsandbytes to reduce memory.
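To see why the 4-bit tip matters, the sketch below estimates the memory taken by the weights alone at different precisions. It is a back-of-the-envelope calculation: it ignores activations, the KV cache, and quantization overhead, so real usage will be higher. The function name is just illustrative.

```python
def weight_memory_gb(n_params: float, bits_per_param: int) -> float:
    """Approximate memory for the model weights only, in GB (10^9 bytes)."""
    return n_params * bits_per_param / 8 / 1e9


# Compare a 7B-parameter model at 16-bit, 8-bit, and 4-bit precision.
for bits in (16, 8, 4):
    print(f"7B model at {bits}-bit: ~{weight_memory_gb(7e9, bits):.1f} GB")
```

At 16-bit the weights of a 7B model need about 14 GB, while at 4-bit they drop to roughly 3.5 GB, which is why quantization lets the model fit on smaller GPUs.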

2. No-Code Integration with n8n or Make

Visual automation tools such as n8n and Make allow HTTP calls in just a few clicks. Create a workflow that:

- Receives input from Webflow or Typeform forms;

- Sends the text to the DeepSeek endpoint hosted in the company's own cloud;

- Returns the response translated into Brazilian Portuguese and emails it to the user.

This approach eliminates the need for a dedicated backend and is perfect for founders who want to validate an idea without investing heavily in infrastructure.
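For reference, the HTTP step of such a workflow boils down to one POST request. The sketch below builds that request in Python and parses the reply. Everything specific here is an assumption: the endpoint URL and model name are hypothetical, and the JSON shape assumes the self-hosted server exposes an OpenAI-style chat completions route, which depends on how you deploy the model.

```python
import json
import urllib.request

# Hypothetical URL of a DeepSeek model hosted in your own cloud.
ENDPOINT_URL = "https://llm.example.com/v1/chat/completions"


def build_request(form_text: str, api_key: str) -> urllib.request.Request:
    """Build the POST request the workflow's HTTP node would send."""
    payload = {
        "model": "deepseek-llm-7b-chat",  # assumed deployment name
        "messages": [
            {"role": "system", "content": "Answer in Brazilian Portuguese."},
            {"role": "user", "content": form_text},
        ],
    }
    return urllib.request.Request(
        ENDPOINT_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def extract_reply(response_body: bytes) -> str:
    """Pull the answer text out of an OpenAI-style response (assumed shape)."""
    data = json.loads(response_body)
    return data["choices"][0]["message"]["content"]
```

In n8n or Make, the same URL, headers, and JSON body go into the HTTP Request module, and the field read by `extract_reply` is what you map into the email step.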

3. Plugins with FlutterFlow and WeWeb

If the goal is a polished front-end, you can embed DeepSeek in FlutterFlow or WeWeb using HTTP Request actions. In the advanced module of the FlutterFlow Course, we explain step by step how to protect your API key in Firebase Functions and avoid public exposure.
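The idea behind protecting the key is that the app only sends the prompt, and a server-side function attaches the secret before calling the model. Here is a minimal sketch of that server-side step in plain Python rather than actual Firebase Functions code. The environment variable name `DEEPSEEK_API_KEY` and the request shape are hypothetical.

```python
import os


def handle_client_request(client_body: dict, env=os.environ) -> dict:
    """Server-side step: read the secret from the environment, never from the client."""
    api_key = env.get("DEEPSEEK_API_KEY")  # hypothetical variable name
    if not api_key:
        raise RuntimeError("DEEPSEEK_API_KEY is not configured on the server")

    # Only the prompt is accepted from the client; any other field is ignored.
    prompt = str(client_body.get("prompt", "")).strip()
    if not prompt:
        raise ValueError("prompt is required")

    # Headers and body the function would forward to the model endpoint.
    return {
        "headers": {"Authorization": f"Bearer {api_key}"},
        "json": {"prompt": prompt},
    }
```

Because the key lives only in the server environment, it never ships inside the FlutterFlow or WeWeb bundle.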

DeepSeek in Brazil: landscape, community, and challenges

The adoption of open-source LLMs here is growing at an accelerated pace. Research groups at USP and UFPR are already testing DeepSeek to summarize academic articles in Portuguese. Furthermore, the DeepSeek-BR group on Discord has over 3,000 members exchanging fine-tuning tips focused on Brazilian jurisprudence.

Fun fact: Since March 2025, AWS São Paulo has been offering g5.12xlarge instances at a promotional price, enabling fine-tuning of DeepSeek-7B for less than R$ 200 in three hours.

Real-world use cases

- A niche e-commerce store using DeepSeek-Coder to generate product descriptions in batches;

- A legal SaaS that runs RAG (Retrieval-Augmented Generation) on summaries of the STF (Supreme Federal Court);

- An internal support chatbot that answers HR-related questions at companies operating under the CLT (Consolidation of Labor Laws).

For a practical overview of RAG, read our guide “What is RAG – AI Dictionary”.
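As a rough illustration of the RAG pattern behind the use cases above, the sketch below retrieves the most relevant document by simple word overlap and places it in the prompt. This is a toy: production systems typically use embeddings and a vector store, and the function names and example documents here are hypothetical placeholders.

```python
PUNCTUATION = ".,;:?!"


def tokenize(text: str) -> set[str]:
    """Lowercase words with surrounding punctuation stripped."""
    words = (w.strip(PUNCTUATION).lower() for w in text.split())
    return {w for w in words if w}


def retrieve(question: str, documents: list[str], k: int = 1) -> list[str]:
    """Return the k documents sharing the most words with the question."""
    q_words = tokenize(question)
    ranked = sorted(documents, key=lambda d: len(q_words & tokenize(d)), reverse=True)
    return ranked[:k]


def build_prompt(question: str, documents: list[str], k: int = 1) -> str:
    """Assemble the prompt that would be sent to the LLM."""
    context = "\n".join(retrieve(question, documents, k))
    return (
        "Use only the context below to answer.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}"
    )


# Placeholder documents, not real legal or HR content.
docs = [
    "Vacation requests must be sent to HR in advance.",
    "Invoices are issued on the first business day.",
]
print(build_prompt("How do I send vacation requests?", docs))
```

The retrieved passage grounds the model's answer in your own documents instead of relying only on what it learned in pre-training.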

Strengths and limitations of DeepSeek

Advantages

Zero cost for research and prototyping

One of the biggest advantages of DeepSeek is its open license for academic and research use. This means you can download, test, and adapt the model without paying royalties or relying on commercial vendors. Ideal for early-stage startups and independent researchers.

Lean models that run locally

With its 7-billion-parameter version, DeepSeek can run on more affordable GPUs, such as the RTX 3090, or even in the cloud with 4-bit quantization. This broadens access for developers who lack robust infrastructure.

Active and contributing community

Since its launch, DeepSeek has accumulated thousands of forks and issues on GitHub. The community has been publishing notebooks, fine-tunes, and prompts optimized for different tasks, accelerating collective learning and application in real-world cases.

Limitations

- The research-only license still prevents direct commercial use;

- There is no official support for Brazilian Portuguese at this time;

- Hardware with 16 GB of VRAM is required for comfortable inference.

Next steps: learning and building with DeepSeek

Understanding what you have learned

If you've followed this article this far, you already have a broad overview of the DeepSeek ecosystem. You know the different models in the family, their differentiating factors compared to other LLMs, and you have clear paths for practical application, even without a technical background.

Consolidating the main concepts

DeepSeek: What is it?

It is an open-source LLM family with different variants (7B/67B parameters), available for research and experimentation. It has gained prominence for its combination of openness, quality of training, and focus on specializations such as coding and reasoning.

The main innovation

Its pre-training approach, with 2 trillion tokens and strategies like curriculum learning, allowed even the 7B model to approach the performance of larger and more expensive alternatives.

How to use DeepSeek

The options range from direct API calls to automated flows via Make or n8n and front-end tools like WeWeb and FlutterFlow. Documentation and the community help shorten the learning curve.

Opportunities in Brazil

The DeepSeek community is rapidly consolidating here, with real-world applications in academic research, SaaS, e-commerce, and teams seeking productivity through AI.

Moving forward with expert support

If you want to accelerate your journey with AI and NoCode, NoCode Start Up offers robust training programs focused on real-world application.

In the SaaS IA NoCode Training, you'll learn how to use LLMs like DeepSeek to create real products, sell them, and scale them with financial freedom.